Meta Calls For Standardized Labeling Of AI-Generated Visual Content

- February 12, 2024

- allix

- AI in Business

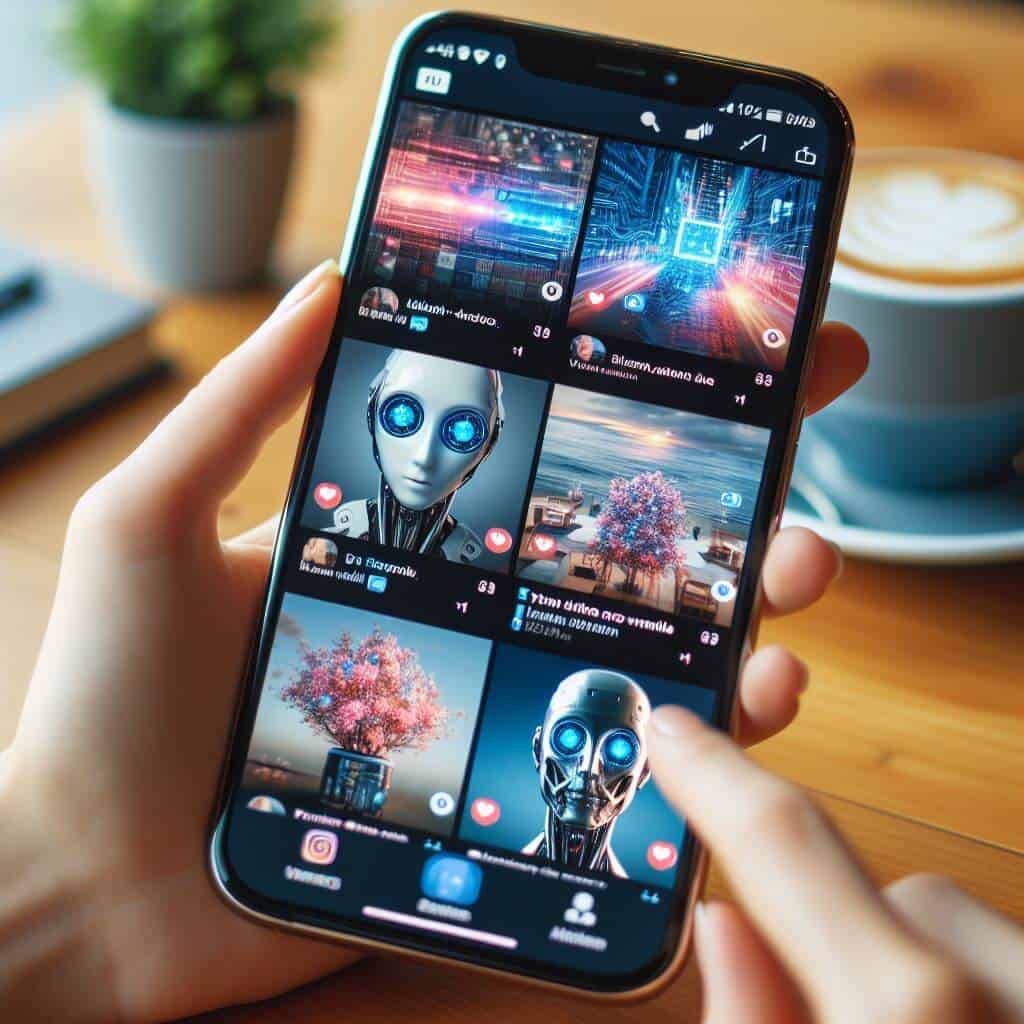

On Tuesday, Meta announced that it is working with other technology companies to develop standards that will help it detect and label AI-generated images shared across its vast user base.

The Silicon Valley-based tech giant expects to roll out a system within months that accurately identifies and tags AI-generated visuals on its platforms: Facebook, Instagram, and Threads. With elections coming up in countries that together account for half of the world's population, platforms such as Meta's feel an urgent need to monitor AI-generated content, amid concerns that malicious actors will use it to spread misinformation. "This technology needs further development and it won't cover everything, but it's a start," Nick Clegg, the company's head of international affairs, told AFP in an interview.

Since December, Meta has marked images created with its own AI tools with both visible and hidden indicators. Clegg said Meta now wants to expand these efforts by partnering with outside companies to make users more aware of such content. In a blog post, Meta said it is working with industry peers to establish common technical standards that signal when AI has been involved in creating a piece of content. This work will involve organizations Meta has previously collaborated with on AI recommendations, including industry leaders such as OpenAI, Google, Microsoft, and Midjourney.

As Clegg pointed out, however, while the industry has made some progress in embedding "signals" into AI-generated images, labeling of AI-generated audio and video has not advanced as quickly. He acknowledged that invisible tagging will not completely eliminate the threat of fraudulent images, but he believes it will significantly reduce their spread, within the limits of current technology.

Meanwhile, Meta encourages users to be skeptical of online content: to assess the reliability of sources and to look closely for details that seem unnatural. Public figures and women in particular have been targeted by realistic but fabricated manipulations known as "deepfakes." In one notable case, fake nude images of pop star Taylor Swift went viral on X, the platform formerly known as Twitter.

The development of generative AI tools has raised concerns about possible abuse, such as using ChatGPT to sow political chaos through disinformation or AI-generated clones. Just last month, OpenAI announced that it would ban the use of its technology by political figures and organizations. Meta, for its part, requires advertisers to disclose any use of AI in creating or editing the visual or audio content of political ads.
