- September 13, 2023

- allix

- Research

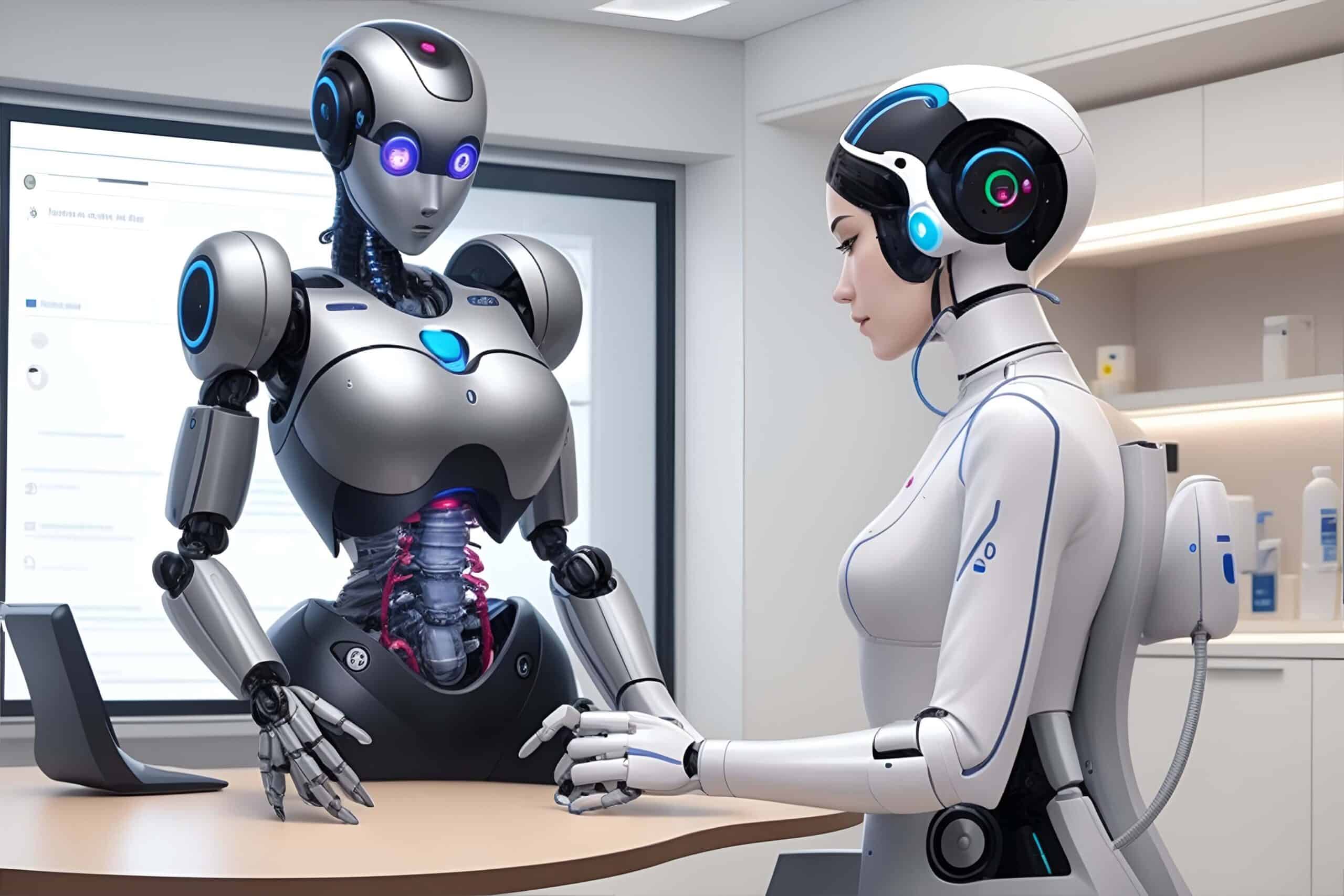

A recent study finds that ChatGPT, an artificial intelligence chatbot, performs about as well as human doctors at suggesting diagnoses for emergency room patients. The Dutch researchers behind it say this advance in AI could potentially revolutionize the medical field. The report, released on Wednesday, nonetheless stresses that ER physicians need not retire their scrubs just yet: while the chatbot may speed up the diagnostic process, it cannot replace human medical judgment and experience.

The research involved the examination of 30 cases treated at an emergency service in the Netherlands during 2022. Researchers provided ChatGPT with anonymized patient histories, laboratory test results, and doctors’ observations, requesting it to propose five potential diagnoses for each case. Subsequently, they compared the chatbot’s list of diagnoses with those suggested by ER doctors who had access to the same information. These diagnoses were then cross-checked against the correct diagnosis for each case.

In this comparison, doctors included the correct diagnosis among their top five possibilities in 87% of cases. ChatGPT version 3.5 did so in 97% of cases, while version 4.0 did so in 87%, matching the doctors.
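The percentages above are top-five hit rates: a case counts as a success if the correct diagnosis appears anywhere in the list of five suggestions. As a minimal sketch of that calculation, the snippet below uses a hypothetical helper, `top_k_hit_rate`, and invented toy cases that are not taken from the study. It also assumes diagnoses can be matched by exact, case-insensitive text, whereas in a real evaluation that match would presumably require clinical judgment.

```python
def top_k_hit_rate(predictions, truths, k=5):
    """Return the fraction of cases whose correct diagnosis is among the first k suggestions.

    predictions: one ranked list of suggested diagnoses per case
    truths: the confirmed diagnosis for each case, in the same order
    """
    if len(predictions) != len(truths):
        raise ValueError("predictions and truths must have the same length")
    if not truths:
        raise ValueError("at least one case is required")
    hits = 0
    for ranked, truth in zip(predictions, truths):
        # Assumption: exact, case-insensitive string match stands in for clinical review.
        top_k = {p.strip().lower() for p in ranked[:k]}
        if truth.strip().lower() in top_k:
            hits += 1
    return hits / len(truths)


# Hypothetical toy data for illustration only; not from the study.
suggested = [
    ["appendicitis", "gastroenteritis", "kidney stone", "diverticulitis", "ovarian cyst"],
    ["migraine", "tension headache", "sinusitis", "cluster headache", "dehydration"],
    ["pneumonia", "bronchitis", "asthma", "heart failure", "pulmonary embolism"],
]
confirmed = ["appendicitis", "meningitis", "pulmonary embolism"]

rate = top_k_hit_rate(suggested, confirmed, k=5)
print(f"Top-5 hit rate: {rate:.0%}")
```

With only 30 cases, each case is worth about 3.3 percentage points, so the ten-point gap between 97% and 87% corresponds to roughly three cases.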

Hidde ten Berg, from the emergency medicine department at Jeroen Bosch Hospital in the Netherlands, summarized, “Simply put, this indicates that ChatGPT was able to suggest medical diagnoses much like a human doctor would.”

Co-author Steef Kurstjens stressed that this study does not foresee computers replacing ER personnel but rather sees AI as a valuable tool for aiding healthcare professionals under pressure. He explained, “The key point is that the chatbot doesn’t replace the physician but it can help in providing a diagnosis and it can maybe come up with ideas the doctor hasn’t thought of.”

Kurstjens also highlighted that large language models like ChatGPT are not intended as medical devices and raised concerns about the privacy implications of feeding confidential medical data into a chatbot.

The study also acknowledged some limitations. The sample size was relatively small, consisting of only 30 cases, and the research primarily focused on straightforward cases where patients presented a single primary complaint. The chatbot’s efficacy in handling complex or rare diseases remains unverified.

The chatbot exhibited some inconsistencies and medically implausible reasoning at times, which could lead to misinformation or incorrect diagnoses, as noted in the report.

Despite these limitations, the findings indicate that ChatGPT has the potential to save time and shorten waits in emergency departments. It could particularly assist less experienced doctors and might help in spotting rare diseases, although that last use was not verified in this study.

The findings, published in the medical journal “Annals of Emergency Medicine,” will be presented at the European Emergency Medicine Congress (EUSEM) 2023, taking place in Barcelona.
