- September 22, 2023

- allix

- Research

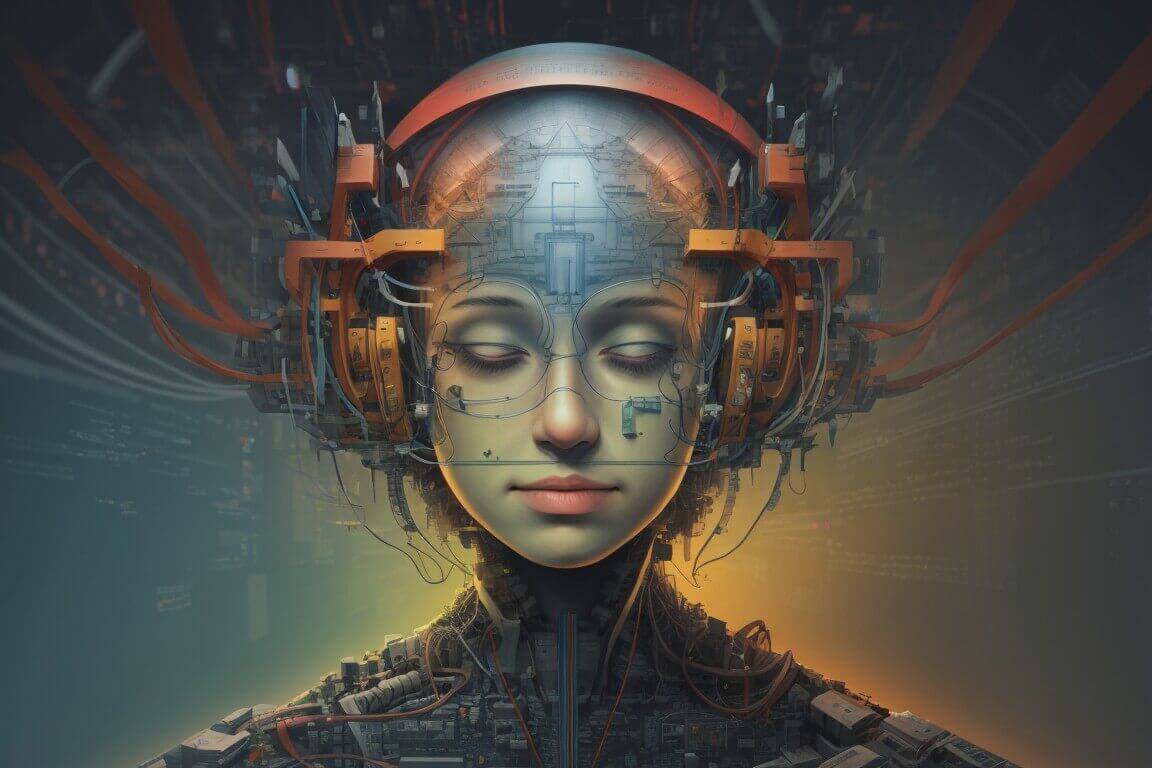

Image recognition is the process of teaching computers to identify and categorize objects or patterns in digital images or video frames. This ability is crucial for various AI applications, including facial recognition, autonomous driving, medical diagnosis, and more.

The history of image recognition algorithms has been marked by significant progress. In the early days, image recognition relied on traditional computer vision methods, which often struggled when dealing with complex and diverse datasets. However, the rise of deep learning, particularly CNNs, has completely transformed the field.

Convolutional Neural Networks, or CNNs, have gained prominence due to their outstanding performance in image recognition tasks. These neural networks draw inspiration from how our own visual system works and are designed to spot patterns, features, and objects in images. CNNs consist of multiple layers, each with a specific role, making them highly effective at handling complex visual data. One remarkable application of CNNs is the recognition of handwritten digits, a critical component of optical character recognition (OCR) systems. Architectures such as LeNet-5 have been used to recognize handwritten digits in postal codes, checks, and forms. These networks extract small local features from the handwritten characters and combine them layer by layer, ultimately classifying the digits accurately.

The heart of CNNs is their convolutional layers. These layers use filters or kernels to analyze input images, searching for specific features. For example, in an image of a cat, one kernel might focus on detecting the edges, while another identifies the shape of the cat’s ear. Convolutional layers are skilled at extracting features from images, allowing the network to understand intricate details.

After the convolutional layers, there are pooling layers, which reduce the data’s complexity. Common pooling methods include max-pooling and average-pooling, which downsize the feature maps while retaining important information and reducing computational load. Pooling layers help the network concentrate on the most critical features.

The final layers in a CNN are fully connected layers. These layers perform the classification based on the extracted features. They connect all the neurons from the previous layers and make decisions about what the image contains. These layers are essential in providing the network’s output, indicating the image’s classification.
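The three layer types described here can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production implementation: the 5x5 toy image, the 2x2 `vertical_edge` kernel, and the hand-picked `weights` and `biases` of the final layer are all assumptions made up for the example, and a real CNN would learn its kernels and weights from data instead.

```python
from math import exp

def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(fmap):
    """Keep positive responses and zero out the rest."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool2d(fmap, size=2):
    """Non-overlapping max-pooling: keep the largest value in each size x size window."""
    return [
        [
            max(fmap[i + a][j + b] for a in range(size) for b in range(size))
            for j in range(0, len(fmap[0]) - size + 1, size)
        ]
        for i in range(0, len(fmap) - size + 1, size)
    ]

def dense_softmax(features, weights, biases):
    """Fully connected layer followed by softmax, giving one probability per class."""
    logits = [sum(w * x for w, x in zip(row, features)) + b
              for row, b in zip(weights, biases)]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Toy 5x5 image (assumed): dark on the left, bright on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hypothetical kernel that responds to a dark-to-bright vertical edge.
vertical_edge = [[-1, 1],
                 [-1, 1]]

feature_map = relu(conv2d(image, vertical_edge))     # 4x4 feature map
pooled = max_pool2d(feature_map)                     # 2x2 after pooling
features = [v for row in pooled for v in row]        # flatten for the dense layer

# Hand-picked weights for two made-up classes: "edge on the left" vs. "edge on the right".
weights = [[0.5, 0.0, 0.5, 0.0],
           [0.0, 0.5, 0.0, 0.5]]
biases = [0.0, 0.0]
probs = dense_softmax(features, weights, biases)
print(pooled, probs)
```

The kernel fires only where the image changes from dark to bright, pooling keeps that strong response while shrinking the 4x4 map to 2x2, and the fully connected layer turns the pooled features into class probabilities.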

To make CNNs effective at image recognition, they need extensive training on large datasets. During training, the network is exposed to thousands or even millions of labeled images to learn the features and patterns associated with various objects. Backpropagation, a fundamental technique in neural network training, helps fine-tune the network’s parameters to reduce errors and improve accuracy.
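The training loop can be illustrated at a very small scale. The sketch below trains a single sigmoid neuron on four labeled examples, where backpropagation reduces to one application of the chain rule; a real CNN repeats the same step layer by layer over far more parameters and images. The toy dataset (the logical OR function), the learning rate of 0.5, and the 500 iterations are assumptions chosen only to keep the example short.

```python
from math import exp, log

def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))

def predict(weights, bias, x):
    """Forward pass: weighted sum of the inputs followed by a sigmoid."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def mean_loss(weights, bias, data):
    """Average binary cross-entropy over the labeled examples."""
    total = 0.0
    for x, y in data:
        p = predict(weights, bias, x)
        total -= y * log(p) + (1 - y) * log(1 - p)
    return total / len(data)

def train_step(weights, bias, data, lr):
    """One full-batch gradient-descent update."""
    grad_w = [0.0] * len(weights)
    grad_b = 0.0
    for x, y in data:
        # Chain rule: for sigmoid + cross-entropy, dLoss/dz = prediction - label.
        delta = predict(weights, bias, x) - y
        for k, xi in enumerate(x):
            grad_w[k] += delta * xi / len(data)
        grad_b += delta / len(data)
    # Move each parameter a small step against its gradient.
    new_weights = [w - lr * g for w, g in zip(weights, grad_w)]
    return new_weights, bias - lr * grad_b

# Toy labeled dataset (assumed): inputs and labels of the logical OR function.
data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = [0.0, 0.0], 0.0
initial_loss = mean_loss(weights, bias, data)
for _ in range(500):
    weights, bias = train_step(weights, bias, data, lr=0.5)
final_loss = mean_loss(weights, bias, data)

predictions = [round(predict(weights, bias, x)) for x, _ in data]
print(initial_loss, final_loss, predictions)
```

Each update nudges the parameters in the direction that lowers the error on the labeled examples, which is the same principle that drives CNN training on millions of images.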

Applications of CNNs in Image Recognition

The versatility and effectiveness of CNNs have led to their widespread use in numerous real-world applications. In healthcare, CNNs have proven invaluable for diagnosing diseases from medical images. Radiologists and doctors use these networks to detect anomalies in X-rays, MRIs, and CT scans. CNNs have shown remarkable accuracy in identifying conditions like cancer, fractures, and neurological disorders. For instance, in the field of dermatology, CNN-based algorithms are employed to detect skin cancers. By analyzing images of skin lesions, these algorithms can identify signs of malignancy, potentially aiding in early diagnosis and treatment.

CNNs play a crucial role in the development of self-driving cars. They help vehicles understand their surroundings by analyzing camera feeds and making real-time decisions. CNNs can detect pedestrians, road signs, other vehicles, and obstacles, making autonomous navigation safer and more reliable. In the context of traffic management, CNNs are used to monitor and optimize traffic flow. By analyzing live camera feeds from intersections, these algorithms can detect congestion, accidents, and traffic violations, facilitating efficient traffic control.

Security and surveillance systems benefit from CNNs’ ability to identify and track objects. Whether it’s monitoring crowded public spaces or securing private properties, these networks excel at recognizing individuals, vehicles, and suspicious activities. CNNs can trigger alerts when they detect unusual behavior, enhancing security measures.

In the agricultural sector, CNNs are used to monitor crop health and pest infestations. Drones equipped with cameras capture images of fields, and CNN-based algorithms analyze these images to identify areas of concern. This enables farmers to take timely actions to protect their crops and optimize yields.

Although CNNs are primarily associated with image recognition, they’ve found applications in natural language processing (NLP) too. In tasks like sentiment analysis, CNNs process textual data and extract meaningful features, improving understanding and interpretation of language. For instance, in the realm of social media analysis, CNNs are used to gauge public sentiment toward products or brands. By analyzing text-based social media posts and comments, these algorithms can determine whether the sentiment is positive, negative, or neutral, providing valuable insights for businesses.

In the domain of satellite image analysis, CNNs are employed to identify land cover types and changes over time. By employing pooling layers, these algorithms can discern significant features in large satellite images, such as bodies of water, urban areas, and agricultural fields. This aids in applications like urban planning and environmental monitoring.

Challenges and the Road Ahead

Despite their remarkable achievements, CNNs and image recognition algorithms face several challenges. One significant concern is the need for extensive labeled data for training, which can be time-consuming and costly to acquire. Another is interpretability: CNNs are often considered “black box” models, meaning their decision-making processes can be difficult to interpret. Researchers are actively working on techniques to make CNNs more transparent and explainable, ensuring their responsible use. Looking ahead, even more advanced image recognition algorithms are likely to emerge. These future algorithms may incorporate multimodal learning, combining visual and textual data for better understanding. Ongoing research is also expected to focus on making AI algorithms more efficient, requiring fewer computational resources and reducing energy consumption.
